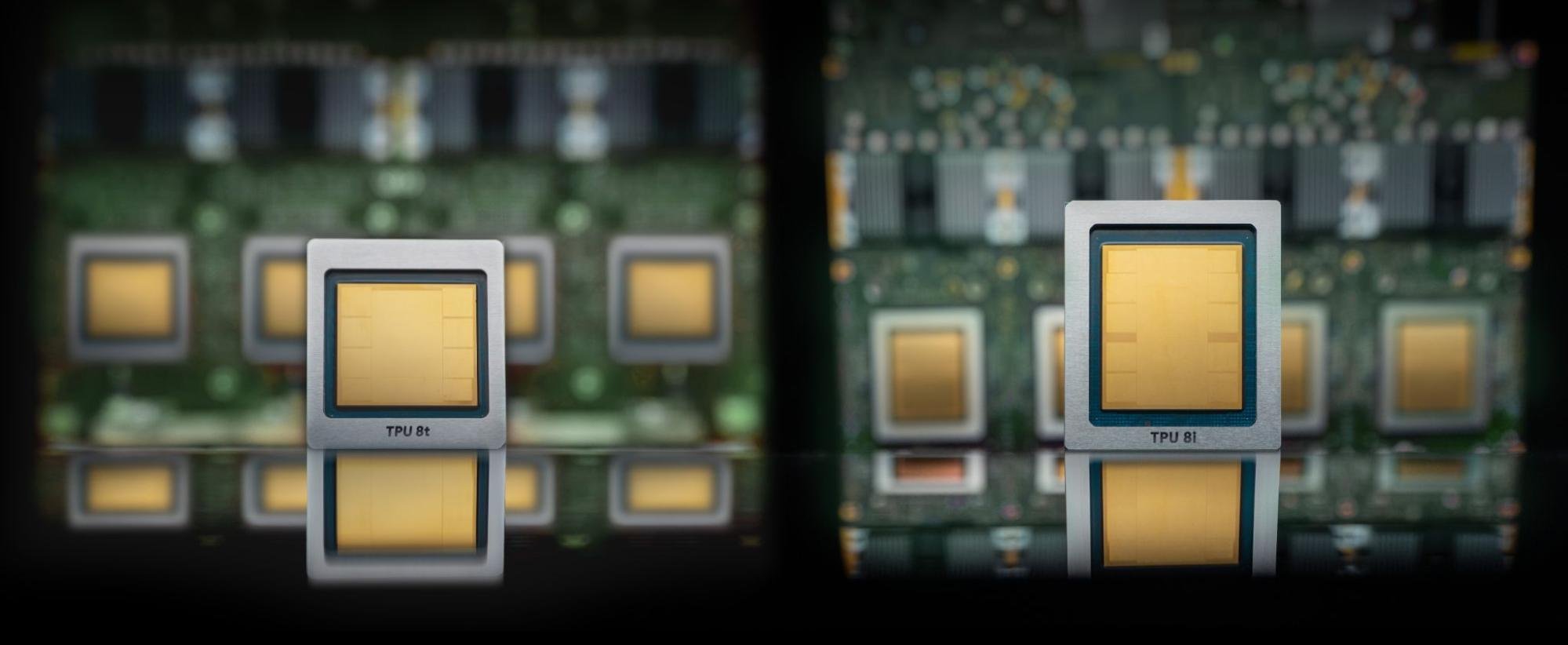

Google Announces TPU v8t Sunfish and TPU v8i Zebrafish

The issue is no longer demand alone; it is whether the surrounding infrastructure is ready.

- StorageReview reported a development that could affect hyperscalers & cloud planning.

- The practical issue is whether demand can be converted into reliable capacity on schedule.

- Watch execution details, customer commitments, and any bottlenecks around power, cooling, silicon, or permitting.

StorageReview reported: The TensorCore configuration is the other major departure. Where v8t uses a single TensorCore at 12.6 PFLOPS, v8i splits compute across two TensorCores at a combined 10.1 PFLOPS. Lower peak throughput sounds like a downgrade until you consider what inference actually looks like at the chip level. Training workloads are batch-dominated: large matmuls amortize fixed overhead, and a single large MXU can sustain near-peak utilization. Inference decoding is the opposite. Batch sizes are small, per-token compute windows are short, and the chip spends a material fraction of its time on collectives, sampling, and routing rather than pure matmul. A single large engine stalls during those irregular gaps. Splitting into two TensorCores lets v8i overlap compute phases more effectively, with each TensorCore fed by its own four directly attached HBM stacks, totaling 288 GB at 8.6 TB/s across the package. The result is higher sustained utilization at the batch sizes that actually run in interactive serving. At the scale-up domain level, the 1,024 active chips in a Boardfly pod aggregate to roughly 295 TB of HBM, 384 GB of on-chip SRAM, and 10.3 EFLOPS of FP4 compute. The SRAM number is the one that matters most for inference: 384 GB of on-chip cache across the domain is enough to hold substantial KV state without touching HBM, which is what makes long-context serving at low latency viable. T.

The important part is what the report says about cloud infrastructure as a working system, not just as a demand story. The constraint is not just chip supply. Advanced compute depends on packaging, memory, networking, power delivery, and the ability to land systems inside facilities that can actually run them at high utilization.

That is the reason the development deserves attention beyond the immediate headline. The underappreciated variable is deployment readiness across networking, power, and packaging, not just chip availability.

That matters for buyers because the useful capacity is the installed, cooled, powered cluster, not the purchase order. It also matters for suppliers because component shortages can shift bargaining power quickly across the stack.

The financial question is whether this improves pricing power, secures scarce capacity, or exposes execution risk that is still being discounted, the operating question is procurement timing, facility readiness, power access, and whether adjacent constraints slow deployment, and the customer question is whether this changes build sequencing, partner dependence, or the cost of scaling clusters across regions.

There is also a timing issue. In AI infrastructure, announcements often arrive before the hard parts are visible: interconnection queues, equipment lead times, operating approvals, financing conditions, and the practical work of matching customer demand to physical capacity.

For readers tracking this market, the useful lens is less about whether demand exists and more about where it can be served without delay. A small operational change can matter if it gives operators more flexibility, improves utilization, or exposes a bottleneck that had been hidden inside a broader growth story.

The next signal to watch is customer commitments, infrastructure readiness, and any signs that power, cooling, silicon supply, or permitting becomes the real bottleneck. The next test is whether delivery schedules, memory availability, and deployment readiness move together or start to diverge.