Anthropic Signs SpaceX Colossus 1 Deal For Major Claude Compute Expansion

The issue is no longer demand alone; it is whether the surrounding infrastructure is ready.

- StorageReview reported that Anthropic will take all compute capacity at SpaceX's Colossus 1 data center, a development that could affect hyperscaler and cloud planning.

- The practical issue is whether demand can be converted into reliable capacity on schedule.

- Watch execution details, customer commitments, and any bottlenecks around power, cooling, silicon, or permitting.

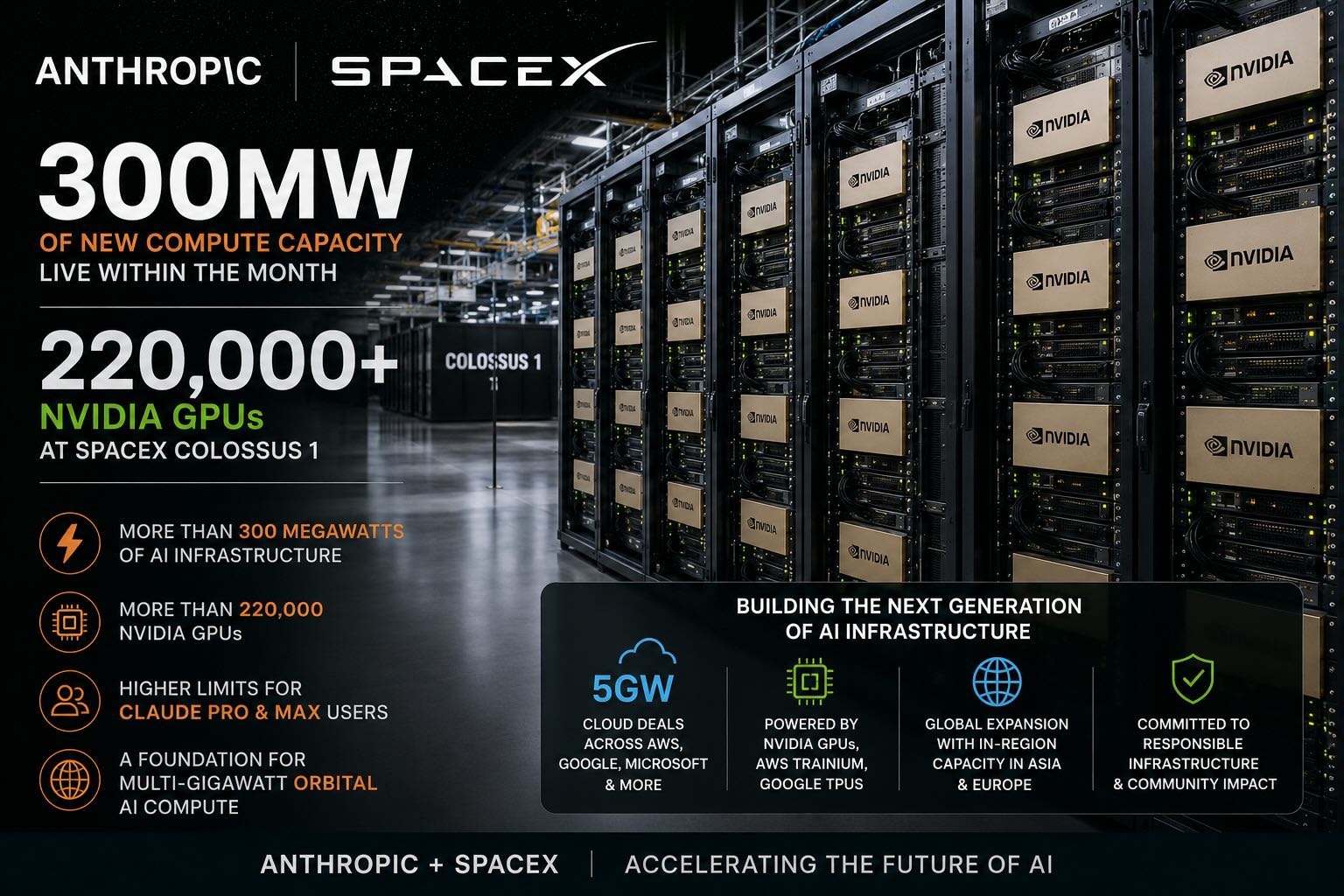

StorageReview reported: Anthropic’s new compute agreement with SpaceX gives the AI company access to all compute capacity at SpaceX’s Colossus 1 data center. The company says this gives it access to more than 300 megawatts of new capacity within the month, including more than 220,000 NVIDIA GPUs. While the immediate impact is higher capacity for Claude users, the deal also stands out as a major utilization win for SpaceX’s AI infrastructure ambitions. There is also a broader strategic angle for SpaceX, as industry chatter has suggested that AI data center capacity tied to Elon Musk’s companies, including xAI, may not have been fully utilized at certain points. Anthropic’s agreement gives SpaceX a major customer for Colossus 1 capacity, which could help demonstrate demand for large-scale AI infrastructure connected to Musk’s broader compute ecosystem. SpaceX has also been linked to future space-based data center ambitions, and Anthropic says it has expressed interest in partnering with SpaceX to develop multiple gigawatts of orbital AI compute capacity. No timeline or technical details were provided, but the agreement gives both companies a practical starting point for a much larger compute relationship.
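As a rough sanity check on the reported figures, the power and GPU counts can be divided to get an implied power budget per GPU. This is a back-of-envelope sketch only: both numbers are reported as "more than", the report does not say whether the 300 megawatts refers to IT load or total facility load, and the function name `watts_per_gpu` is a hypothetical helper introduced here for illustration.

```python
# Back-of-envelope: power per GPU implied by the reported figures.
# Both inputs are lower bounds ("more than"), so the ratio is only indicative.
REPORTED_POWER_MW = 300    # "more than 300 megawatts" (from the report)
REPORTED_GPUS = 220_000    # "more than 220,000 NVIDIA GPUs" (from the report)


def watts_per_gpu(power_mw: float, gpu_count: int) -> float:
    """Return watts available per GPU for a given site power and GPU count.

    Assumption: the power figure covers everything attributed to the site,
    which may include cooling, networking, and host CPUs, not just the GPUs.
    """
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return power_mw * 1_000_000 / gpu_count


implied = watts_per_gpu(REPORTED_POWER_MW, REPORTED_GPUS)
print(f"Implied power per GPU: {implied:.0f} W")
```

On the reported numbers this works out to roughly 1.4 kW per GPU. That figure says nothing about any specific GPU model; it only illustrates why power delivery and cooling sit alongside silicon as deployment constraints.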

Read narrowly, this is one more item in the daily flow of infrastructure news. Read against the buildout cycle, it points to a more practical question for cloud infrastructure: can the operational stack around compute keep up with demand? The constraint is not just chip supply. Advanced compute depends on packaging, memory, networking, power delivery, and the ability to land systems inside facilities that can actually run them at high utilization.

That makes the second-order detail more important than the announcement language. The underappreciated variable is deployment readiness across networking, power, and packaging, not just chip availability.

That matters for buyers because the useful capacity is the installed, cooled, powered cluster, not the purchase order. It also matters for suppliers because component shortages can shift bargaining power quickly across the stack.

The financial question is whether this improves pricing power, secures scarce capacity, or exposes execution risk that is still being discounted. The operating question covers procurement timing, facility readiness, power access, and whether adjacent constraints slow deployment. The customer question is whether this changes build sequencing, partner dependence, or the cost of scaling clusters across regions.

The market tends to price the demand story first and the delivery work later. That can hide the hardest parts of the buildout: grid queues, procurement windows, permitting, vendor capacity, and the coordination needed to turn a plan into a running site.

For a board focused on AI infrastructure, the item matters because it clarifies where leverage may sit. Sometimes that leverage belongs to chip suppliers or cloud platforms. In other cases it moves to utilities, landlords, financing partners, equipment vendors, or regulators that control the pace of deployment.

The next signals to watch are customer commitments, infrastructure readiness, and any sign that power, cooling, silicon supply, or permitting is becoming the real bottleneck. The next test is whether delivery schedules, memory availability, and deployment readiness move together or start to diverge.