VMware Cloud Foundation 9.1 Positions Private Cloud as the Home for Enterprise AI

The issue is no longer demand alone; it is whether the surrounding infrastructure is ready.

- StorageReview reported on Broadcom's VMware Cloud Foundation 9.1 release, a development that could affect hyperscaler and private cloud planning.

- The practical issue is whether demand can be converted into reliable capacity on schedule.

- Watch execution details, customer commitments, and any bottlenecks around power, cooling, silicon, or permitting.

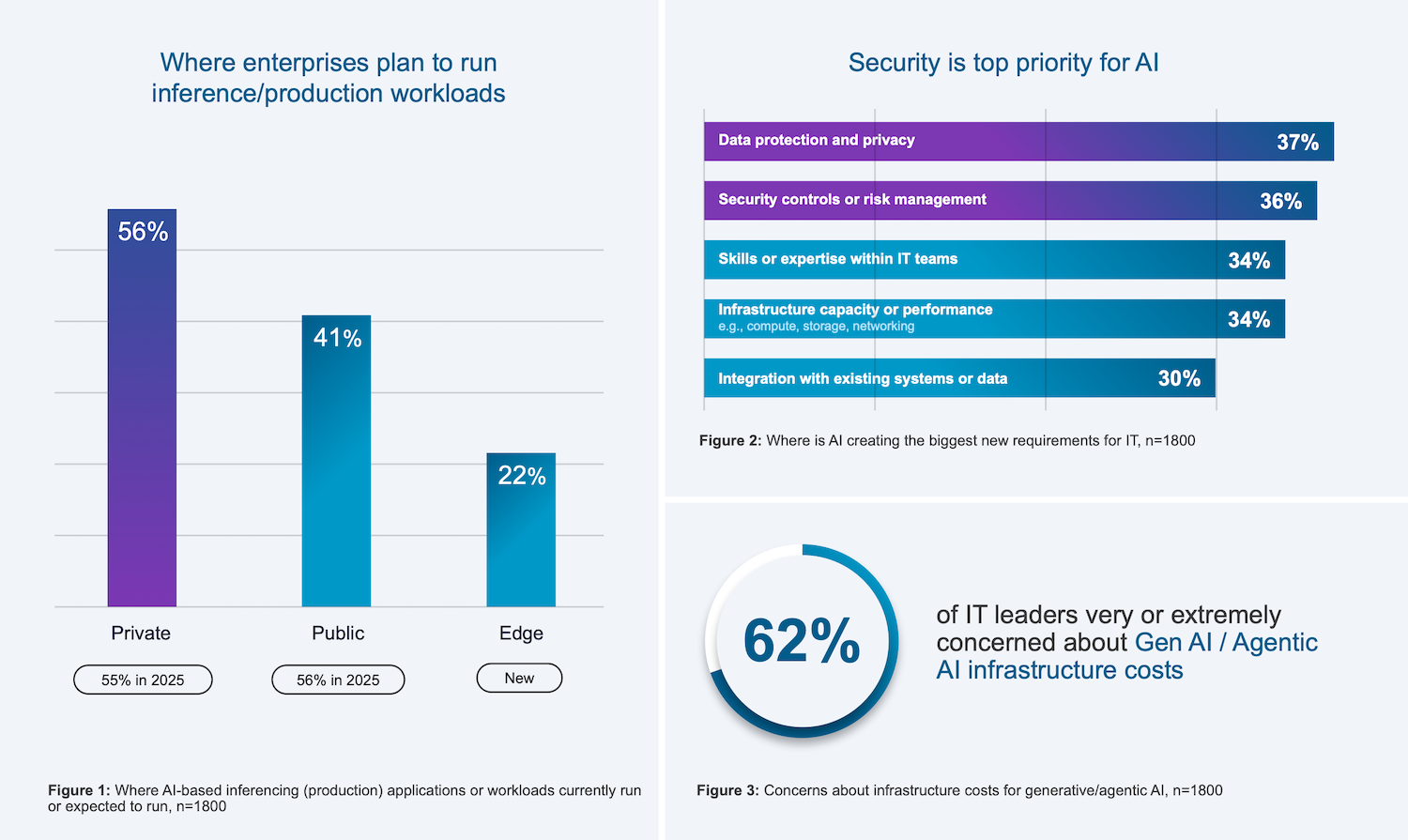

StorageReview reported that Broadcom announced VMware Cloud Foundation 9.1, positioning the platform as a private cloud foundation optimized for production AI workloads. The release focuses on tighter integration of AI and Kubernetes, expanded hardware support across AMD, Intel, and NVIDIA, and embedded security capabilities designed for enterprise AI deployments. The update targets organizations running inference and emerging agentic AI applications, with an emphasis on cost control, infrastructure flexibility, and data governance.

Broadcom also shared early findings from its upcoming Private Cloud Outlook 2026 report, indicating a continued shift toward private cloud for production AI. Radius Tech compiled the data in partnership with Broadcom, and the global survey included 1,800 IT decision-makers in enterprise organizations. According to the preview data, 56% of organizations are running or planning to run inference workloads in private environments, compared to 41% in the public cloud, a decline year over year. Cost concerns remain a primary driver, with 62% of IT leaders citing generative AI infrastructure costs as a major issue. Additionally, 36% report new requirements around data protection, privacy, and risk management driven by AI adoption.

Krish Prasad, senior vice president and general manager of VMware Cloud Foundation Division at Broadcom, highlighted three main.

Read narrowly, this is one more item in the daily flow of infrastructure news. Read against the buildout cycle, it points to a more practical question for cloud infrastructure: can the operating environment around compute keep up with demand? The constraint is not just chip supply. Advanced compute depends on packaging, memory, networking, power delivery, and the ability to land systems inside facilities that can actually run them at high utilization.

That makes the second-order detail more important than the announcement language. The underappreciated variable is deployment readiness across networking, power, and packaging, not just chip availability.

That matters for buyers because the useful capacity is the installed, cooled, powered cluster, not the purchase order. It also matters for suppliers because component shortages can shift bargaining power quickly across the stack.

The financial question is whether this improves pricing power, secures scarce capacity, or exposes execution risk that is still being discounted. The operating question is one of procurement timing, facility readiness, power access, and whether adjacent constraints slow deployment. The customer question is whether this changes build sequencing, partner dependence, or the cost of scaling clusters across regions.

The market tends to price the demand story first and the delivery work later. That can hide the hardest parts of the buildout: grid queues, procurement windows, permitting, vendor capacity, and the coordination needed to turn a plan into a running site.

For a board focused on AI infrastructure, the item matters because it clarifies where leverage may sit. Sometimes that leverage belongs to chip suppliers or cloud platforms. In other cases it moves to utilities, landlords, financing partners, equipment vendors, or regulators that control the pace of deployment.

The next signals to watch are customer commitments, infrastructure readiness, and any signs that power, cooling, silicon supply, or permitting is becoming the real bottleneck. The next test is whether delivery schedules, memory availability, and deployment readiness move together or start to diverge.