Introducing Anthropic’s Claude Opus 4.7 model in Amazon Bedrock

The arrival of Anthropic’s Claude Opus 4.7 model in Amazon Bedrock is less a verdict on software jobs than a reminder that AI-assisted coding still needs engineering judgment.

- Generative AI is making it easier for non-programmers and developers to get a first version of software running.

- The harder question is who reviews, secures, and maintains that code once it enters a real business.

- Watch whether companies pair faster prototyping with clear ownership, testing, and controls.

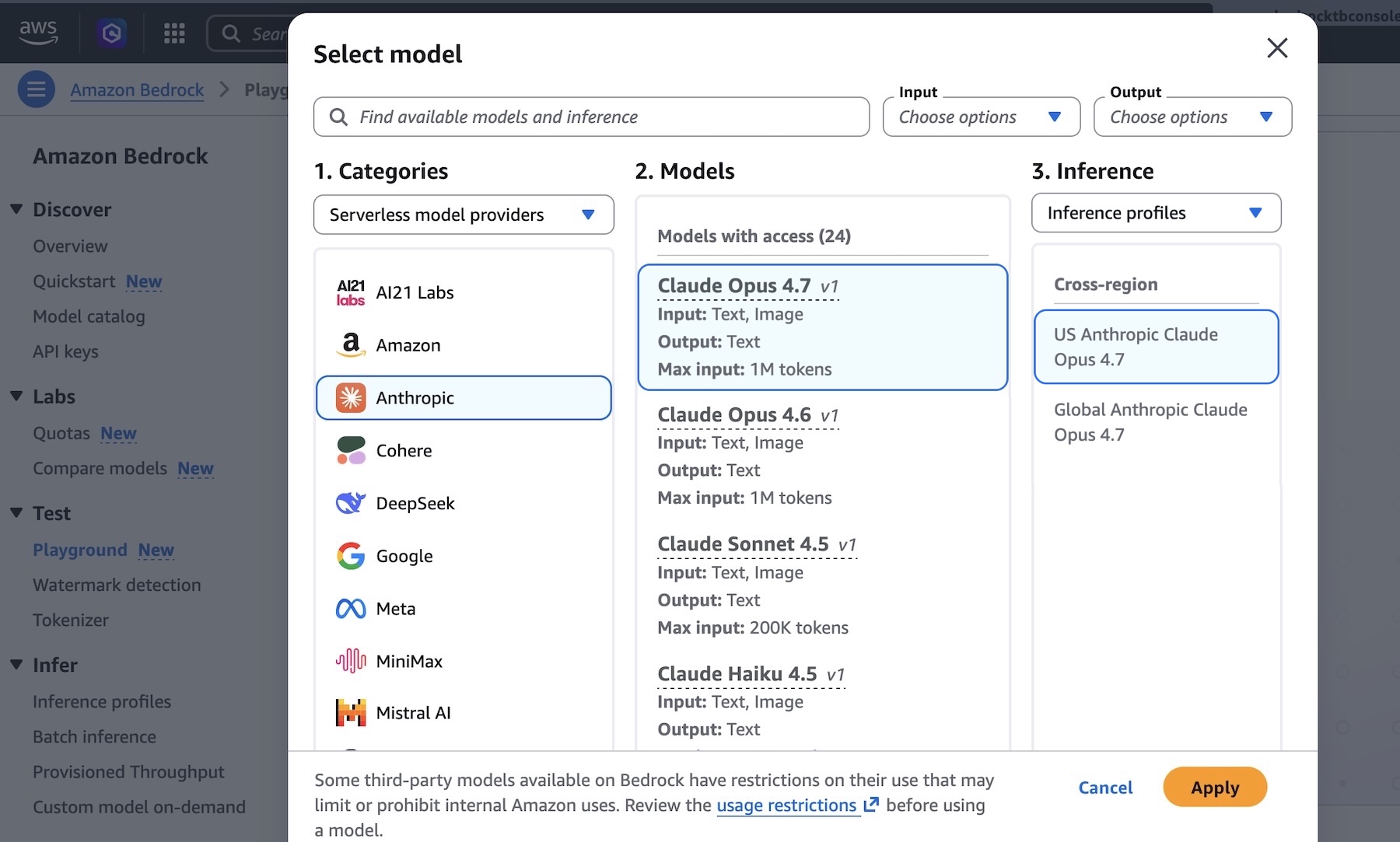

AWS News Blog reported: Today, we’re announcing Claude Opus 4.7 in Amazon Bedrock, Anthropic’s most intelligent Opus model for advancing performance across coding, long-running agents, and professional work.

Claude Opus 4.7 is powered by Amazon Bedrock’s next generation inference engine, delivering enterprise-grade infrastructure for production workloads. Bedrock’s new inference engine has brand-new scheduling and scaling logic which dynamically allocates capacity to requests, improving availability particularly for steady-state workloads while making room for rapidly scaling services. It provides zero operator access, meaning customer prompts and responses are never visible to Anthropic or AWS operators, keeping sensitive data private.

According to Anthropic, Claude Opus 4.7 model provides improvements across the workflows that teams run in production such as agentic coding, knowledge work, visual understanding, and long-running tasks. Opus 4.7 works better through ambiguity, is more thorough in its problem solving, and follows instructions more precisely. The model is an upgrade from Opus 4.6 but may require prompting changes and harness tweaks to get the most out of the model. To learn more, visit Anthropic’s prompting guide.

Claude Opus 4.7 model in action

You can get started with Claude Opus 4.7 model in Amazon Bedrock console. Choose Playground under Test menu and choose Claude Opus 4.7 when you se.
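The console steps quoted above have a programmatic counterpart. The following is a minimal sketch, not taken from the AWS post, of calling a Bedrock-hosted model through the boto3 `bedrock-runtime` client and its Converse API. The excerpt does not give the model identifier, so `model_id` is left for the caller to supply from the Bedrock console; the helper names `build_converse_request` and `ask`, the default region, and the token limit are illustrative assumptions.

```python
from typing import Any, Dict


def build_converse_request(model_id: str, prompt: str, max_tokens: int = 512) -> Dict[str, Any]:
    """Build keyword arguments for the Bedrock Runtime Converse API.

    model_id is NOT given in the article excerpt; copy the real
    identifier from the Bedrock console or model catalog.
    """
    if not model_id:
        raise ValueError("model_id is required; look it up in the Bedrock console")
    return {
        "modelId": model_id,
        # Converse expects a list of messages, each with a list of content blocks.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        # max_tokens default is an illustrative assumption, not a recommendation.
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(model_id: str, prompt: str, region: str = "us-east-1") -> str:
    """Send one prompt and return the first text block of the reply.

    Requires boto3, AWS credentials, and model access in the chosen
    region (the default region here is an assumption).
    """
    import boto3  # imported lazily so the request builder works without it

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(model_id, prompt))
    return response["output"]["message"]["content"][0]["text"]
```

Keeping the request builder separate from the network call makes the payload easy to review and unit test, which fits the point above that someone still has to own, test, and control AI-assisted code once it reaches a real business.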

Read narrowly, this is one more item in the daily flow of infrastructure news. Read against the buildout cycle, it points to a more practical question for cloud infrastructure: can the operations that surround compute keep up with demand? The constraint is not only the price of electricity. It is the timing of grid access, the flexibility of large loads, and the ability of data center operators to behave less like passive consumers and more like active participants in the power system.

That makes the second-order detail more important than the announcement language. Power access and interconnection timing are likely to matter more than the announced demand signal itself.

For infrastructure teams, that makes power procurement and site selection part of the product roadmap. A campus can have customers, capital, and equipment lined up and still lose time if the grid connection, market rules, or operating model cannot absorb the load profile.

The financial question is whether this development improves pricing power, locks in scarce capacity, or exposes execution risk that the market may still be discounting. The operating question covers procurement timing, facility readiness, network design, and the likelihood that adjacent constraints will slow realized deployment. The customer question is whether this changes build sequencing, partner dependence, or the economics of scaling regions and clusters over the next few quarters.

The market tends to price the demand story first and the delivery work later. That can hide the hardest parts of the buildout: grid queues, procurement windows, permitting, vendor capacity, and the coordination needed to turn a plan into a running site.

For a board focused on AI infrastructure, the item matters because it clarifies where leverage may sit. Sometimes that leverage belongs to chip suppliers or cloud platforms. In other cases it moves to utilities, landlords, financing partners, equipment vendors, or regulators that control the pace of deployment.

The signals to watch next are disclosures on customer commitments and infrastructure readiness, along with any evidence that power, cooling, silicon supply, or permitting becomes the real gating factor. The test after that is whether this remains a narrow market experiment or becomes a normal tool for balancing AI demand with grid reliability.