AMD Instinct MI350P: Enterprise PCIe AI Inference Returns to Standard Servers

The issue is no longer demand alone; it is whether the surrounding infrastructure is ready.

- StorageReview reported that AMD has announced the Instinct MI350P, a PCIe accelerator that could affect enterprise and cloud capacity planning.

- The practical issue is whether demand can be converted into reliable capacity on schedule.

- Watch execution details, customer commitments, and any bottlenecks around power, cooling, silicon, or permitting.

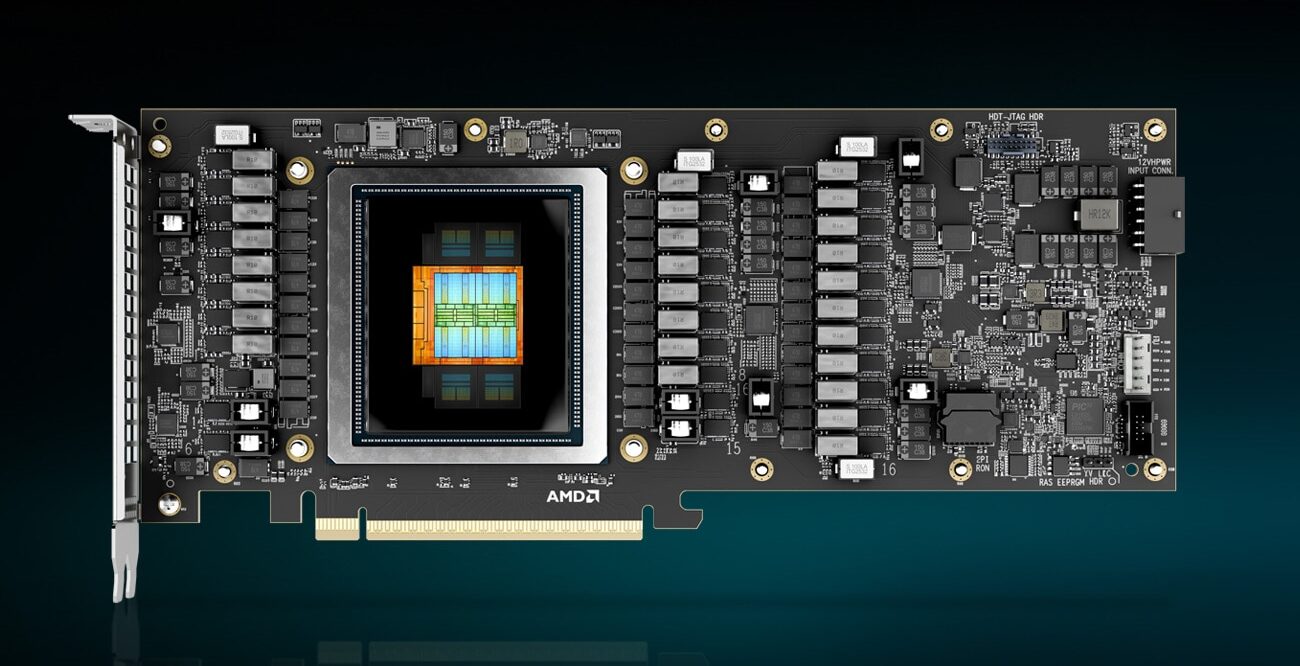

StorageReview reported: AMD has announced the Instinct MI350P, a PCIe accelerator aimed at enterprises that want on-premises AI inference without rebuilding their data center. The card is a dual-slot, full-height, full-length design built for standard air-cooled servers. It is also the first time in nearly four years that AMD has put a current-generation Instinct chip into a form factor that drops into a normal server.

The PCIe Instinct line had effectively gone quiet after the MI210 shipped in early 2022. Every generation since (MI300X, MI325X, and the OAM MI350X) has been an OAM socketed module on a Universal Baseboard, requiring a purpose-built chassis with the power delivery and airflow for eight 1,000W-class accelerators in a single tray. That works for hyperscalers buying GPUs by the rack. It does not work for an enterprise that wants on-prem inference but cannot or will not commit to a custom AI rack. The MI350P fits that gap, and at the moment, NVIDIA does not have a flagship-tier server PCIe card in the same class, so AMD has the segment to itself for now.

The MI350P is not a binned MI350X. AMD designed a smaller chip for it. The MI350X carries two I/O dies, each with four accelerator complex dies (XCDs), for a total of eight XCDs and 256 compute units. The MI350P has a single I/O die with four XCDs and 128 compute units, half the silicon, running at the same 2.2 GHz peak clock as its larger.
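The die arithmetic in the report can be checked with a short sketch. The class and field names below are hypothetical and chosen only for illustration. The die counts and compute-unit totals are the ones quoted above. The per-XCD figure is derived from those totals rather than stated by the source, and the tray power figure treats "1,000W-class" as a nominal 1,000 W per accelerator, which is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstinctPart:
    """Illustrative container for the figures quoted in the report."""
    name: str
    io_dies: int
    xcds_per_io_die: int
    compute_units: int

    @property
    def xcds(self) -> int:
        # Total accelerator complex dies across all I/O dies.
        return self.io_dies * self.xcds_per_io_die

    @property
    def cus_per_xcd(self) -> float:
        # Derived value: quoted compute units divided by total XCDs.
        return self.compute_units / self.xcds


# Figures as quoted in the report.
MI350X = InstinctPart("MI350X", io_dies=2, xcds_per_io_die=4, compute_units=256)
MI350P = InstinctPart("MI350P", io_dies=1, xcds_per_io_die=4, compute_units=128)


def silicon_ratio(small: InstinctPart, large: InstinctPart) -> float:
    """Compute-unit ratio between two parts (0.5 means half the silicon)."""
    return small.compute_units / large.compute_units


# Assumption: "1,000W-class" is treated as a nominal 1,000 W per accelerator.
OAM_TRAY_ACCELERATORS = 8
OAM_NOMINAL_WATTS = 1_000
tray_accelerator_watts = OAM_TRAY_ACCELERATORS * OAM_NOMINAL_WATTS

if __name__ == "__main__":
    for part in (MI350X, MI350P):
        print(part.name, part.xcds, "XCDs,", part.cus_per_xcd, "CUs per XCD")
    print("MI350P / MI350X compute-unit ratio:", silicon_ratio(MI350P, MI350X))
    print("Nominal accelerator power per OAM tray (W):", tray_accelerator_watts)
```

Under these figures both parts work out to the same number of compute units per XCD, which is consistent with the report's description of the MI350P as half the silicon rather than a cut-down MI350X. The nominal tray figure covers the accelerators only, not the rest of the chassis.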

Read narrowly, this is one more item in the daily flow of infrastructure news. Read against the buildout cycle, it points to a more practical question for cloud infrastructure: can the operating environment around compute keep up with demand? The constraint is not only the price of electricity. It is the timing of grid access, the flexibility of large loads, and the ability of data center operators to behave less like passive consumers and more like active participants in the power system.

That makes the second-order detail more important than the announcement language. Power access and interconnection timing are likely to matter more than the announced demand signal itself.

For infrastructure teams, that makes power procurement and site selection part of the product roadmap. A campus can have customers, capital, and equipment lined up and still lose time if the grid connection, market rules, or operating model cannot absorb the load profile.

The financial question is whether this improves pricing power, secures scarce capacity, or exposes execution risk that is still being discounted. The operating question is one of procurement timing, facility readiness, power access, and whether adjacent constraints slow deployment. The customer question is whether this changes build sequencing, partner dependence, or the cost of scaling clusters across regions.

The market tends to price the demand story first and the delivery work later. That can hide the hardest parts of the buildout: grid queues, procurement windows, permitting, vendor capacity, and the coordination needed to turn a plan into a running site.

For a board focused on AI infrastructure, the item matters because it clarifies where leverage may sit. Sometimes that leverage belongs to chip suppliers or cloud platforms. In other cases it moves to utilities, landlords, financing partners, equipment vendors, or regulators that control the pace of deployment.

The next signals to watch are customer commitments, infrastructure readiness, and any signs that power, cooling, silicon supply, or permitting is becoming the real bottleneck. The next test is whether this remains a narrow market experiment or becomes a normal tool for balancing AI demand with grid reliability.