Linux may be ending support for older network drivers due to influx of false AI-generated bug reports — maint

The development puts cloud infrastructure execution, not headline demand, at the center of the story.

- Tom's Hardware reported a development that could affect hyperscalers and cloud planning.

- The practical issue is whether demand can be converted into reliable capacity on schedule.

- Watch execution details, customer commitments, and any bottlenecks around power, cooling, silicon, or permitting.

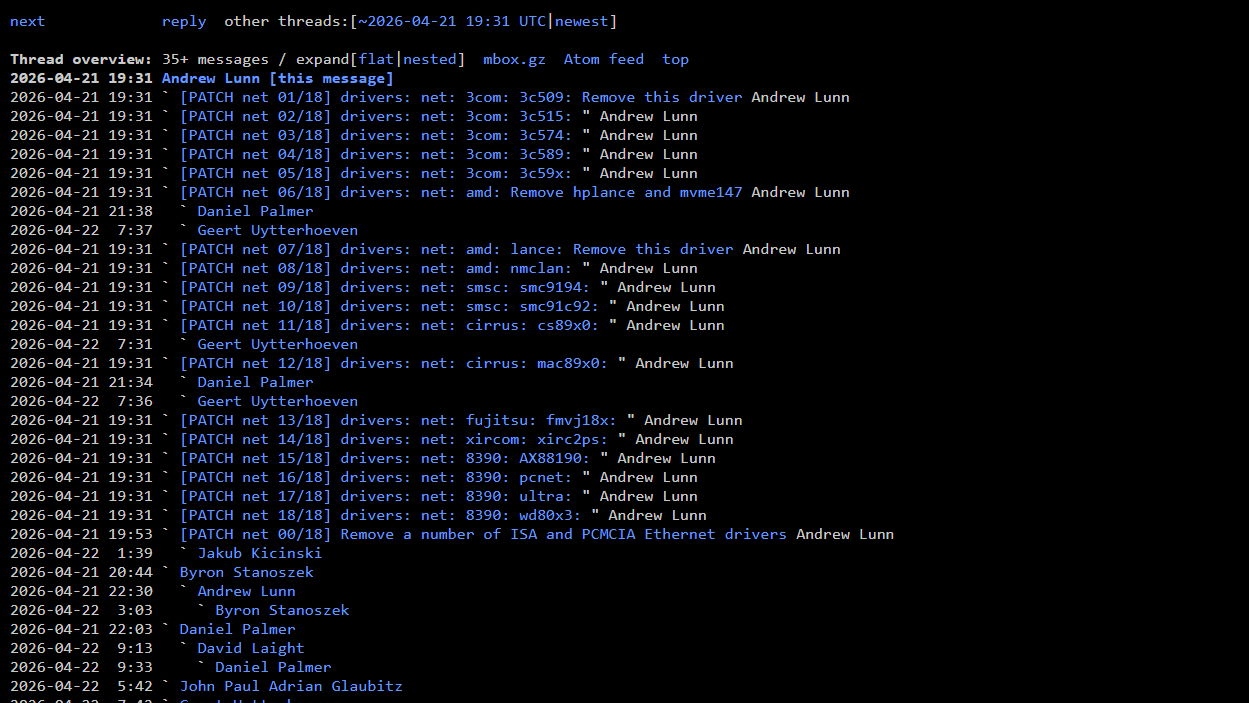

Tom's Hardware reported that Linux kernel developers are reviewing a proposal to remove obsolete ISA and PCMCIA-era Ethernet drivers from the mainline kernel, citing a rising maintenance burden from AI-driven bug reports and fuzzing. The change would cut around 27,000 lines of legacy code.

The important part is what the report says about cloud infrastructure as a working system, not just as a demand story. The constraint is execution. AI infrastructure demand is visible, but turning it into usable capacity requires power, equipment, permitting, supply-chain coordination, and customers that are ready to commit.

That is the reason the development deserves attention beyond the immediate headline. Execution speed, supply-chain coordination, and regional delivery risk remain more important than headline ambition.

Operators, cloud buyers, and investors are therefore watching the operating details more closely than the headline. The winner is usually not the party with the loudest demand signal, but the one that removes bottlenecks early enough to deliver capacity when customers need it.

The financial question is whether this development improves pricing power, locks in scarce capacity, or exposes execution risk that the market may still be discounting. The operating question concerns procurement timing, facility readiness, network design, and the likelihood that adjacent constraints will slow realized deployment. The customer question is whether this changes build sequencing, partner dependence, or the economics of scaling regions and clusters over the next few quarters.

There is also a timing issue. In AI infrastructure, announcements often arrive before the hard parts are visible: interconnection queues, equipment lead times, operating approvals, financing conditions, and the practical work of matching customer demand to physical capacity.

For readers tracking this market, the useful lens is less about whether demand exists and more about where it can be served without delay. A small operational change can matter if it gives operators more flexibility, improves utilization, or exposes a bottleneck that had been hidden inside a broader growth story.

The next signals to watch are disclosures on customer commitments and infrastructure readiness, along with any evidence that power, cooling, silicon supply, or permitting has become the real gating factor. The test after that is whether the project details support the ambition in the announcement.