Japan Bets $16 Billion to Propel Rapidus in Global AI Chip Race

The issue is no longer demand alone; it is whether the surrounding infrastructure is ready.

- Bloomberg Technology reported that Japan approved ¥631.5 billion ($4 billion) in additional subsidies for Rapidus, a move that could affect hyperscaler and cloud capacity planning.

- The practical issue is whether demand can be converted into reliable capacity on schedule.

- Watch execution details, customer commitments, and any bottlenecks around power, cooling, silicon, or permitting.

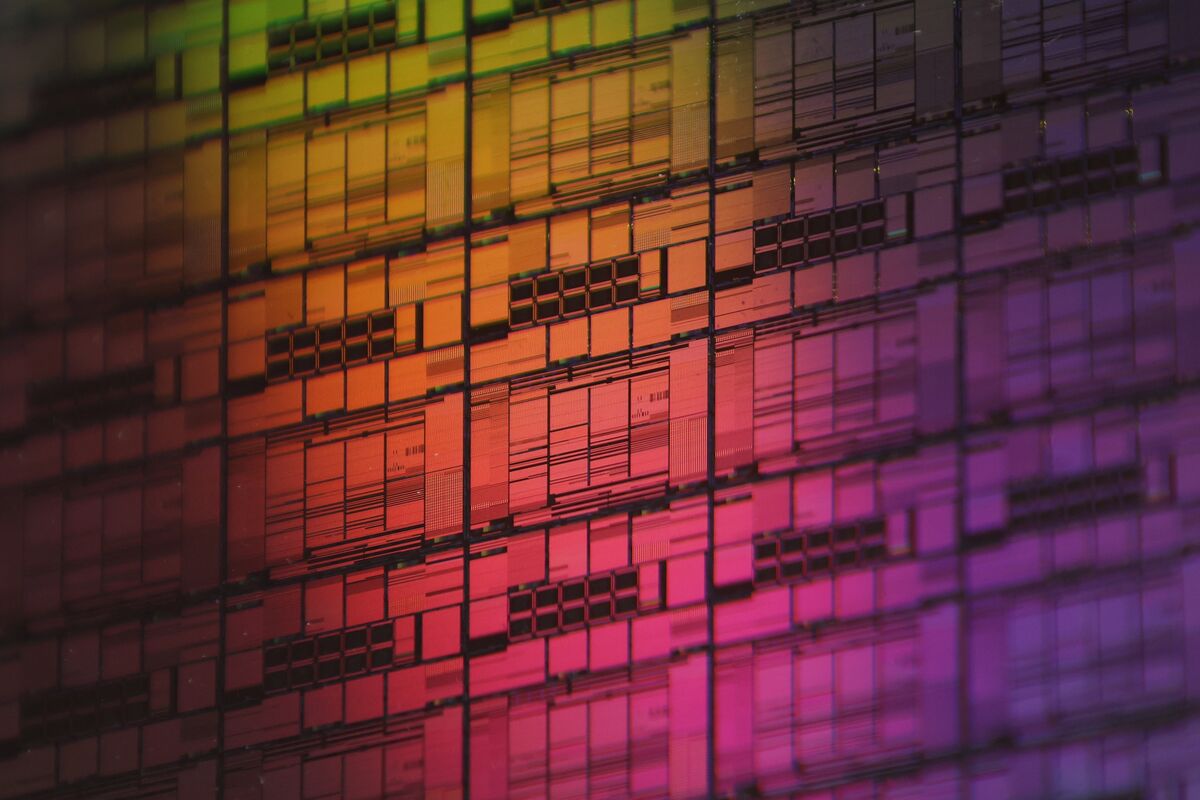

Bloomberg Technology reported (by Mari Kiyohara, April 11, 2026 at 4:22 AM UTC, updated April 11, 2026 at 11:25 AM UTC): Japan approved ¥631.5 billion ($4 billion) in additional subsidies to quicken Rapidus Corp.’s entry into the high-stakes AI chipmaking arena, ramping up support for a project widely regarded as a long shot. The capital is intended to bankroll Rapidus’ work for IT firm Fujitsu Ltd., one of the initial clients that Tokyo hopes will get the signature endeavor off the ground. The new money raises the fees and investments that the government is injecting into the startup to ¥2.6 trillion ($16.3 billion) by the end of the current fiscal year to March 2027, according to a statement from the Ministry of Economy, Trade and Industry. An external committee inspected Rapidus’ foundry in Hokkaido in northern Japan, and signed off on its technological progress, the ministry said Saturday.

The story lands in a market where demand is already assumed. The more useful question is whether the supporting layer around cloud infrastructure is flexible enough to turn that demand into available capacity. The constraint is not just chip supply. Advanced compute depends on packaging, memory, networking, power delivery, and the ability to land systems inside facilities that can actually run them at high utilization.

The pressure point is timing. The underappreciated variable is deployment readiness across networking, power, and packaging, not just chip availability.

That matters for buyers because the useful capacity is the installed, cooled, powered cluster, not the purchase order. It also matters for suppliers because component shortages can shift bargaining power quickly across the stack.

The financial question is whether this development improves pricing power, locks in scarce capacity, or exposes execution risk that the market may still be discounting. The operating question covers procurement timing, facility readiness, network design, and the likelihood that adjacent constraints will slow realized deployment. The customer question is whether this changes build sequencing, partner dependence, or the economics of scaling regions and clusters over the next few quarters.

This is where AI infrastructure differs from ordinary software growth. Capacity has to be financed, permitted, powered, cooled, connected, staffed, and then sold into real workloads before the economics are visible.

The practical read is that infrastructure advantage is becoming more local and more operational. Two companies can chase the same AI demand and end up with very different outcomes if one has better access to power, more credible delivery dates, or a cleaner path through procurement and permitting.

The signals to watch are upcoming disclosures on customer commitments and infrastructure readiness, along with any evidence that power, cooling, silicon supply, or permitting becomes the real gating factor. A further test is whether delivery schedules, memory availability, and deployment readiness move together or start to diverge.