MinIO Introduces MemKV for Petabyte-Scale AI Inference Memory

The real test is whether power access can keep pace with AI infrastructure demand.

- StorageReview reported a development that could affect hyperscalers & cloud planning.

- The practical issue is whether demand can be converted into reliable capacity on schedule.

- Watch execution details, customer commitments, and any bottlenecks around power, cooling, silicon, or permitting.

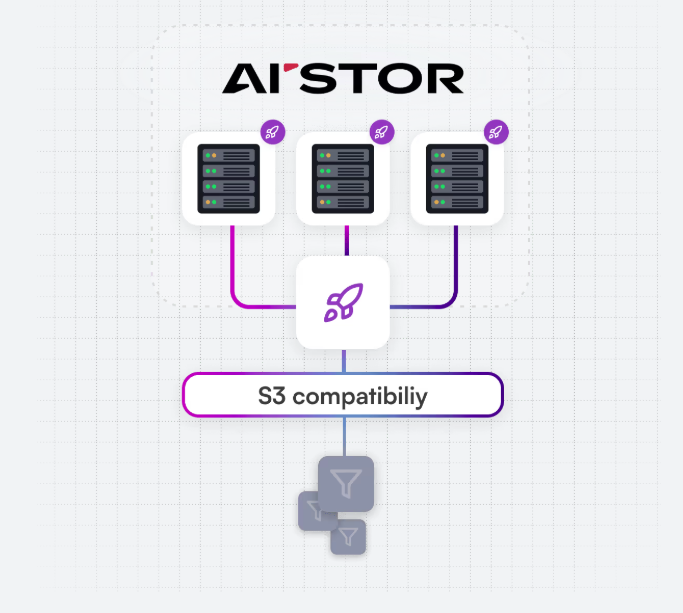

StorageReview reported: MinIO has announced MemKV, a context memory store designed to address a growing bottleneck in large-scale AI inference environments. Positioned as the second core component of the company's portfolio alongside AIStor, MemKV extends MinIO's data infrastructure into the memory tier, targeting persistent, shared context for agentic AI workloads operating across GPU clusters. As AI systems evolve from single-response interactions to multi-step reasoning and task execution, maintaining context across inference cycles has become critical. In current architectures, context is frequently lost due to limited capacity in GPU-adjacent memory tiers such as HBM and DRAM. This forces GPUs to recompute previously generated context, increasing latency, compute utilization, and energy consumption. MinIO characterizes this as a recompute tax that compounds at scale, particularly in hyperscale and cloud environments. MemKV is designed to mitigate this issue by providing a shared, persistent memory layer capable of microsecond retrieval at the petabyte scale. By maintaining context across inference operations, the platform reduces redundant computation and improves overall system efficiency. In internal benchmarks, MinIO reports improvements in time-to-first-token at production concurrency levels. In a representative deployment with 128 GPUs and 128K-token context windows, GPU utiliz.

The story lands in a market where demand is already assumed. The more useful question is whether the supporting layer around cloud infrastructure is flexible enough to turn that demand into available capacity. The constraint is not only the price of electricity. It is the timing of grid access, the flexibility of large loads, and the ability of data center operators to behave less like passive consumers and more like active participants in the power system.

The pressure point is timing. Power access and interconnection timing are likely to matter more than the announced demand signal itself.

For infrastructure teams, that makes power procurement and site selection part of the product roadmap. A campus can have customers, capital, and equipment lined up and still lose time if the grid connection, market rules, or operating model cannot absorb the load profile.

The financial question is whether this improves pricing power, secures scarce capacity, or exposes execution risk that is still being discounted, the operating question is procurement timing, facility readiness, power access, and whether adjacent constraints slow deployment, and the customer question is whether this changes build sequencing, partner dependence, or the cost of scaling clusters across regions.

This is where AI infrastructure differs from ordinary software growth. Capacity has to be financed, permitted, powered, cooled, connected, staffed, and then sold into real workloads before the economics are visible.

The practical read is that infrastructure advantage is becoming more local and more operational. Two companies can chase the same AI demand and end up with very different outcomes if one has better access to power, more credible delivery dates, or a cleaner path through procurement and permitting.

The next signal to watch is customer commitments, infrastructure readiness, and any signs that power, cooling, silicon supply, or permitting becomes the real bottleneck. The next test is whether this remains a narrow market experiment or becomes a normal tool for balancing AI demand with grid reliability.